The rise of CRISPR-Cas genome editing has reshaped the landscape of modern biomedicine. Once a conceptual tool borrowed from bacterial immunity, CRISPR is now driving real clinical interventions and transforming the therapeutic possibilities for genetic disease. Yet, with such power comes significant responsibility. The ability to precisely modify the genome demands assurance that each edit occurs exactly where intended, and nowhere else. Unintended genomic alterations, known as off-target effects, remain one of the most important safety challenges in translating CRISPR technologies into the clinic.

For CRISPR-based medicines to advance safely and effectively, off-target analysis must be both comprehensive and scientifically rigorous. As the regulatory environment evolves and therapeutic programs mature, a multidimensional, orthogonal approach to assessing off-targets has become a foundational expectation.

Shaping the next era of gene editing requires a deeper understanding of why off-target evaluation matters, how current methodologies measure up, what best practices are emerging and where CRISPR safety assessment is headed.

The stakes: why off-target effects matter

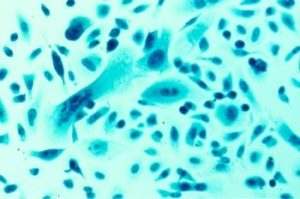

The power of CRISPR-Cas systems lies in their precision: programmable nucleases allow researchers to target specific genomic sequences for modification. But even rare cuts at unintended sites can pose serious clinical risks. Off-target activity can disrupt essential genes, introduce unwanted mutations or generate secondary pathologies. Robust assessment of such events is not only a scientific best practice; it is a regulatory requirement.

Moving a CRISPR therapy into human trials requires demonstrating a comprehensive understanding of where genome-editing tools act, including low-frequency edits that would be undetectable by less sensitive methods. This expectation marks a shift from traditional efficacy-focused evaluation towards a much broader safety-centered framework.

The complexity of off-target detection

One of the major challenges in CRISPR safety evaluation is that no single method provides a complete picture of off-target activity. Instead, researchers must navigate a complex ecosystem of technologies, each with its own benefits and limitations.

- In cellulo assays: these cell-based approaches often offer the highest true positive rates because they capture genome-editing activity in a biologically relevant environment. However, these assays may not perform well in sensitive or hard-to-edit cell types, and can face challenges representing diverse genomic contexts

- In silico prediction: computational tools can rapidly scan the genome for sequences resembling the intended target. While efficient and broadly applicable, these methods suffer from high false positive rates due to their inability to model biological activity, chromatin state or enzymatic dynamics

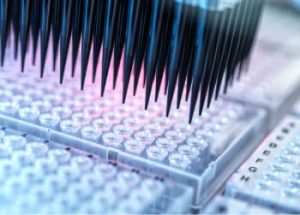

- Biochemical in vitro assays: highly sensitive biochemical assays can identify potential cleavage sites in purified DNA. These methods excel in sensitivity but often produce false positives since they do not replicate cellular conditions.
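To make the in silico idea concrete, the following is a minimal sketch of a sequence-similarity scan. It is an illustration only, not any published tool: the function name, the `NGG` PAM rule, the mismatch limit and the restriction to the forward strand are all simplifying assumptions. Production tools typically also search the reverse strand and may allow bulges and alternative PAMs.

```python
from typing import Iterator, NamedTuple


class Hit(NamedTuple):
    position: int    # 0-based start of the candidate site on the forward strand
    site: str        # genomic sequence at the candidate site
    mismatches: int  # number of positions that differ from the guide


def hamming(a: str, b: str) -> int:
    """Count mismatched positions between two equal-length sequences."""
    return sum(x != y for x, y in zip(a, b))


def scan_forward_strand(genome: str, guide: str, max_mismatches: int = 3) -> Iterator[Hit]:
    """Yield sites followed by an NGG PAM that differ from the guide
    by at most max_mismatches (hypothetical helper, forward strand only)."""
    genome, guide = genome.upper(), guide.upper()
    n = len(guide)
    # A candidate needs n guide-length bases plus a 3-base PAM after it.
    for i in range(len(genome) - n - 2):
        # NGG PAM: any base, then two guanines.
        if genome[i + n + 1 : i + n + 3] != "GG":
            continue
        site = genome[i : i + n]
        mm = hamming(site, guide)
        if mm <= max_mismatches:
            yield Hit(i, site, mm)
```

A scan like this sees only sequence. It has no notion of chromatin state or enzyme kinetics, which is why every hit it returns is a candidate to be tested rather than a confirmed off-target.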

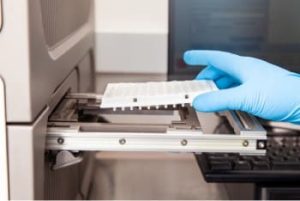

Across all off-target methods, detection is heavily dependent on next-generation sequencing (NGS). Variability in genomic DNA input, sequencing depth, error profiles and library preparation workflow can introduce significant inconsistencies between samples. These operational hurdles highlight the critical need for standardized, comprehensive methods for off-target assessment.
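The dependence on sequencing depth can be illustrated with a simple binomial model. The sketch below assumes that each read at a site independently carries the edit with probability equal to the true editing frequency, and that a site is called when at least `min_reads` edited reads are observed. It ignores sequencing error, PCR duplicates and background noise, all of which raise the depth needed in practice. The function names are hypothetical.

```python
from math import comb


def detection_probability(depth: int, edit_freq: float, min_reads: int = 1) -> float:
    """P(at least min_reads edited reads) when edited reads ~ Binomial(depth, edit_freq)."""
    p_fewer = sum(
        comb(depth, k) * edit_freq**k * (1.0 - edit_freq) ** (depth - k)
        for k in range(min_reads)
    )
    return 1.0 - p_fewer


def required_depth(edit_freq: float, min_reads: int = 1, confidence: float = 0.95) -> int:
    """Smallest read depth at which the detection probability reaches the confidence level."""
    depth = min_reads
    while detection_probability(depth, edit_freq, min_reads) < confidence:
        depth += 1
    return depth
```

Under these idealized assumptions, seeing even a single edited read from a site edited at 0.1% with 95% probability takes roughly 3,000 reads at that site, and demanding several supporting reads pushes the requirement higher. Differences in depth between samples therefore translate directly into differences in what each sample can detect.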

The case for an orthogonal strategy

Recognizing that each off-target detection method has inherent blind spots, the field is shifting towards multi-method, orthogonal evaluation strategies. By combining approaches that complement one another, researchers can better identify true off-target sites and minimize the risk of missing clinically relevant events.

A notable example comes from work at the Children’s Hospital of Philadelphia, US. In developing an individualized in vivo base editing therapy (‘N-of-1’), the team relied on an integrated strategy that combined in vitro, in silico and in cellulo off-target nomination. The assessment incorporated the patient’s specific genome sequence and prioritized sites using known true positive rates from different assay formats. This combined analysis enabled informed, risk-based decision-making before moving towards clinical intervention [1]. Regulatory agencies have taken note. The US Food and Drug Administration’s recent draft guidance explicitly recommends multiple orthogonal methods for off-target nomination to ensure that no single method’s weaknesses compromise overall safety [2].
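One way to picture how nominations from several assay formats might be merged and ranked is sketched below. It is not the procedure used in the work cited above. The true positive rates are invented placeholders, and the scoring rule (treating each nominating assay as independent evidence) is an assumption made for illustration.

```python
from collections import defaultdict

# Hypothetical per-assay true positive rates, for illustration only.
ASSUMED_TPR = {"in_cellulo": 0.6, "in_vitro": 0.2, "in_silico": 0.05}


def prioritize(
    nominations: dict[str, set[str]], tpr: dict[str, float]
) -> list[tuple[str, float, list[str]]]:
    """Merge per-assay nominations and rank sites by a naive combined score.

    Score = 1 - product(1 - tpr[assay]) over the assays that nominated the site,
    which assumes the assays err independently (a simplification).
    """
    support: dict[str, list[str]] = defaultdict(list)
    for assay, sites in nominations.items():
        for site in sites:
            support[site].append(assay)

    ranked = []
    for site, assays in support.items():
        p_all_false = 1.0
        for assay in assays:
            p_all_false *= 1.0 - tpr[assay]
        ranked.append((site, 1.0 - p_all_false, sorted(assays)))

    # Highest score first; ties broken by site name for a stable order.
    ranked.sort(key=lambda r: (-r[1], r[0]))
    return ranked
```

The union keeps every nominated site on the list, so nothing flagged by only one assay is silently dropped. The score only decides which sites are validated first.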

In off-target analysis, it is typically safer to overestimate potential off-target sites than to underestimate them. Even when initial nomination uses highly expressed or unoptimized nucleases that are more prone to off-target cutting, the nominated sites can later be evaluated under clinically relevant conditions. A site edited in a high-expression system may show no editing when a low-dose, high-fidelity nuclease is used in primary cells. Identifying the site early, and confirming later that it is not edited under clinical conditions, helps mitigate risk and build confidence.

Current gaps in off-target analysis

Despite advances in assay development and sequencing technology, gaps remain in the field’s ability to detect and characterize all off-target outcomes:

- Structural variants remain challenging: CRISPR editing, including base editing, can produce large deletions or chromosomal translocations, events that often escape detection by short-read NGS. Approaches to detect these outcomes exist but remain limited and insufficiently validated

- Limitations of short-read sequencing: most nomination and validation methods rely on short-read NGS, inherently restricting the ability to map large or complex genomic alterations. Long-read sequencing technologies are being explored as a solution, but widespread adoption requires improved analytic frameworks and validation

- Unbiased methods for new editing modalities: as base editors and next-generation CRISPR systems evolve, new nomination methods, such as CHANGE-seq-BE, are being investigated to assess whether existing off-target tools adequately capture the unique footprints of these newer technologies [3].

Building towards standardization and measurable confidence

A major development shaping the future of CRISPR safety is the push towards standardized analytical frameworks. The field is gradually coalescing around the need for well-characterized genome editing standards that allow researchers to clearly express confidence levels such as:

‘We are X% confident this method detects Y% of all off-targets down to Z% editing’
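A statement of this form can be backed by a standard confidence bound. As a sketch, suppose a reference standard contains a known number of true off-target sites edited at or above Z%, and a method detects some of them. An exact (Clopper-Pearson) one-sided lower bound on the detection rate then supplies Y for a chosen confidence level X. The function names and the reference-standard setup are assumptions for illustration, not an established consortium protocol.

```python
from math import comb


def binom_tail(n: int, k: int, p: float) -> float:
    """P(X >= k) for X ~ Binomial(n, p)."""
    return sum(comb(n, i) * p**i * (1.0 - p) ** (n - i) for i in range(k, n + 1))


def sensitivity_lower_bound(detected: int, total: int, confidence: float = 0.95) -> float:
    """Exact one-sided lower confidence bound on the true detection rate.

    Finds the rate p at which seeing `detected` or more hits out of `total`
    would have probability 1 - confidence, using bisection.
    """
    if detected == 0:
        return 0.0
    alpha = 1.0 - confidence
    lo, hi = 0.0, 1.0
    for _ in range(60):  # each step halves the interval
        mid = (lo + hi) / 2.0
        if binom_tail(total, detected, mid) < alpha:
            lo = mid  # rate too low to explain the data; move up
        else:
            hi = mid
    return lo
```

For instance, `sensitivity_lower_bound(50, 50)` would supply Y at X = 95% for a method that found all 50 reference sites. The bound stays below 100% even for a perfect score, and it tightens only as the number of well-characterized reference sites grows, which is one reason shared standards matter.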

Efforts led by consortia—such as the Genome Editing Consortium at the National Institute of Standards and Technology—are playing a central role in establishing these benchmarks across diverse editing contexts.

As standards evolve, they will provide a much-needed foundation for regulatory alignment. The field will increasingly benefit from consistent expectations for risk evaluation, similar to established frameworks used in oncology or hereditary disease diagnostics.

The path forward: safely unlocking CRISPR’s potential

CRISPR is rapidly advancing from research tool to therapeutic platform, bringing unprecedented potential to treat previously incurable genetic diseases. But the technology’s future hinges on our ability to evaluate and mitigate off-target risks with precision and clarity.

The future of off-target analysis will be shaped by:

- Improved biological and library preparation workflows that lower detection limits and increase confidence

- The development of sophisticated nomination and validation assays tailored to emerging gene-editing modalities

- Expansion of long-read sequencing and analytic capabilities

- Increasingly rigorous, globally harmonized standards

With these advancements, researchers can more confidently identify true off-targets, interpret their potential biological consequences and guide clinical decision-making. Ultimately, comprehensive and orthogonal off-target evaluation is not just a technical necessity; it is the cornerstone of patient safety and clinical success.

As genome editing continues to mature, such rigorous approaches will ensure that the full promise of CRISPR can be realized safely, ethically and effectively.

Originally written for European Biopharmaceutical Review, Spring 2026, pages 47-49. © Samedan Ltd.